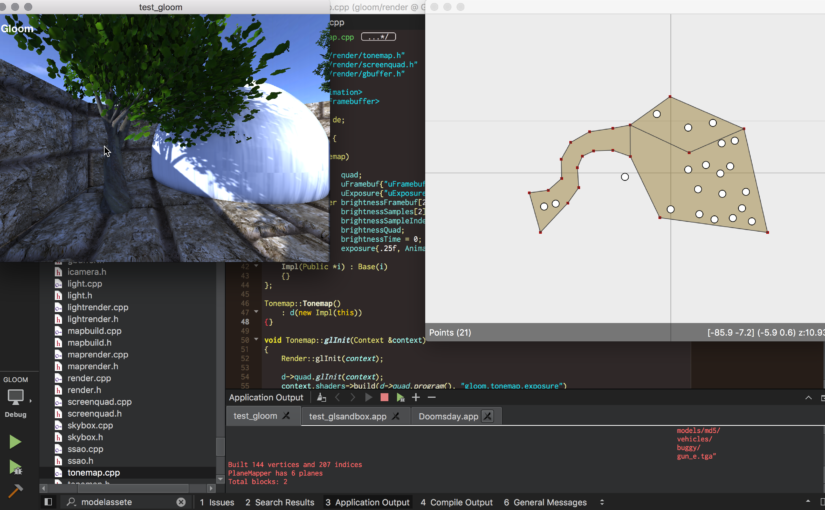

This is the final installment of a short series of posts detailing what I’ve learned while exploring the possible directions that a redesigned Doomsday renderer could take. This post is about integrating the new renderer into the existing engine.

Tag: illumination

Further rendering explorations – Part 2

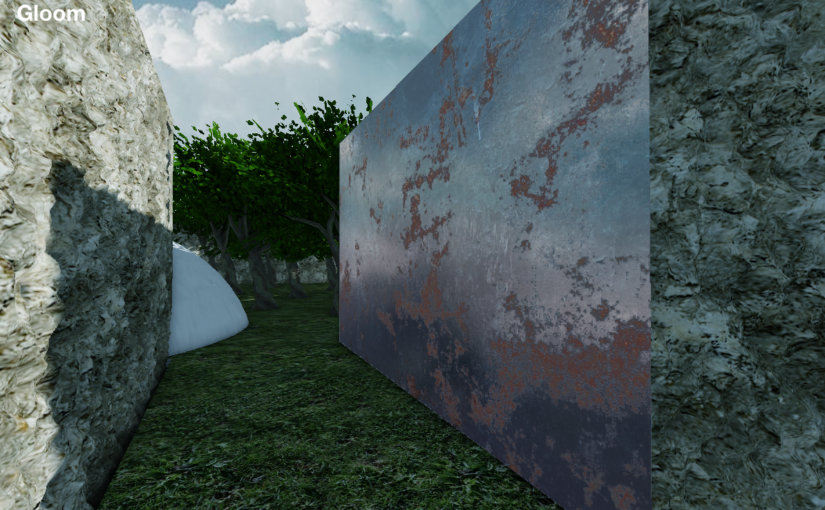

During the spring I’ve been exploring the possibilities and potential directions that a completely redesigned renderer could take. Continuing from part one, this post contains more of the results and related thoughts about where things could and should be heading.

Further rendering explorations – Part 1

When it comes to graphics, things sure have changed since the beginning of the project. Back in the day — almost 20 (!) years ago — GPUs were relatively slow, you had a few MBs of VRAM, and screen resolutions were in the 1K range. Nowadays most of these metrics have grown by an order of magnitude or two and the optimal way of using the GPU has changed. While CPUs have gotten significantly faster over the years, GPU performance has dramatically increased in vast leaps and bounds. Today, a renderer needs to be designed around feeding the GPU and allowing it do its thing as independently as possible.

With so much computation power available, rendering techniques and algorithms are allowed to be much more complex. However, Doomsday’s needs are pretty specific — what is the correct approach to take here? During the spring I’ve been exploring the possibilities and potential directions that a completely redesigned renderer could take. In this post, I’ll share some of the results and related thoughts about where things could and should be heading.